AI Impact Report: Q1 2026

A quarterly report sharing insights from 400+ companies integrating AI into daily engineering work.

Welcome to the latest issue of Engineering Enablement, a weekly newsletter sharing research and perspectives on developer productivity.

📅 Live research readout: Sign up here to join Abi for a readout and interactive discussion about the impact of AI on engineering velocity.

Since we released our Q4 2025 AI Impact Report, reasoning models have improved, agentic workflows have gained traction, and teams are finding new ways to integrate AI into how they build.

Each quarter, we analyze the prior quarter’s data to benchmark AI adoption rates, productivity impact, and how teams are using these tools. For this edition, we expanded our dataset by more than 40% to give you a more representative picture.

We’ve compiled this analysis into our AI-Assisted Engineering: Q1 2026 Impact Report, which we are releasing today.

The full report covers industry adoption rates (now at 93%), tooling benchmarks, and the growing risks of “Shadow AI.” You can read the full report here. Below are two previews of interesting shifts we’ve observed since last quarter.

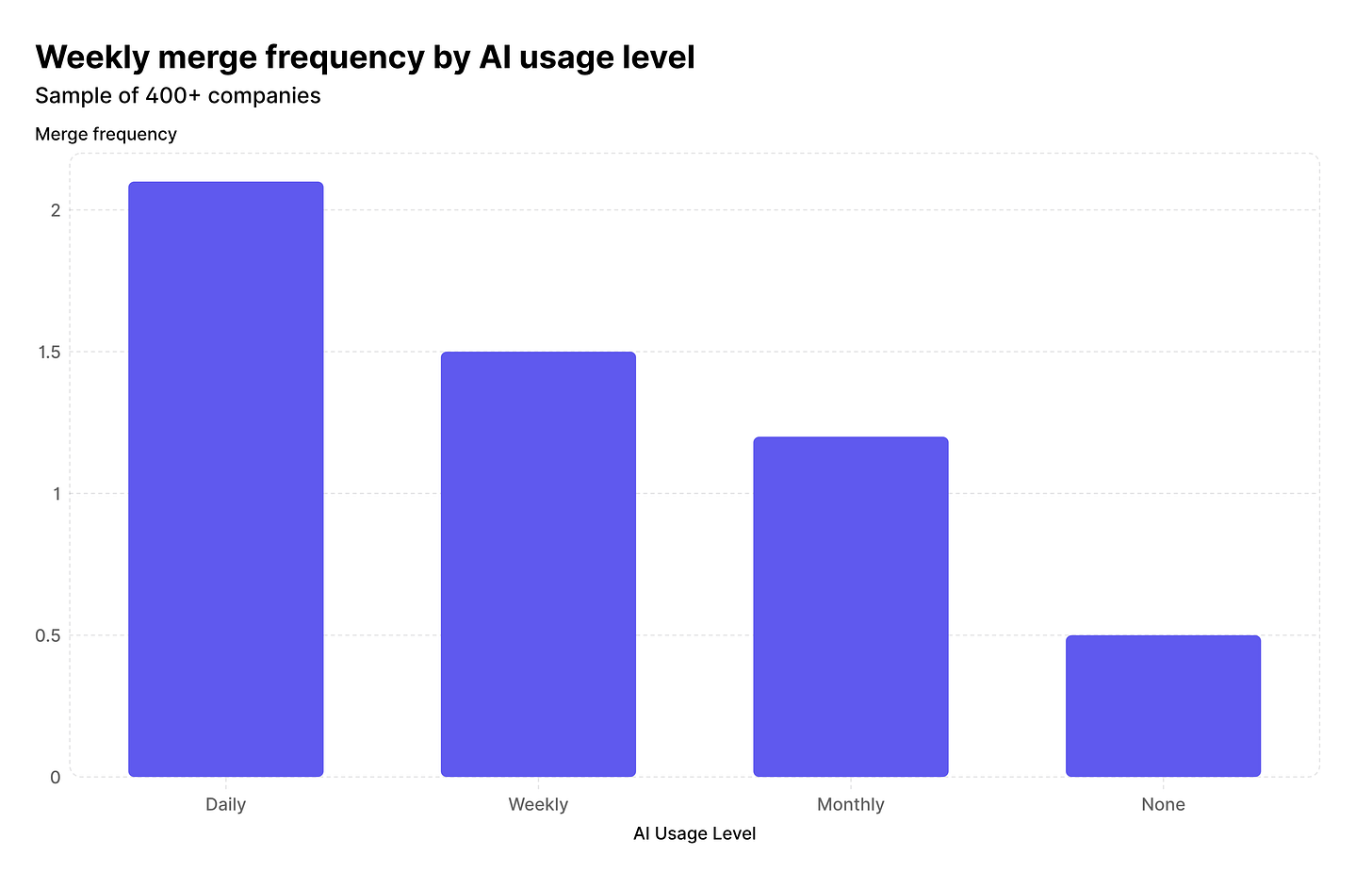

Engineering managers are shipping 4x more code than six months ago

Perhaps the most dramatic structural shift we observed is the changing nature of the engineering manager role.

In Q4, we noted that engineering managers who used AI daily were shipping twice as many pull requests during our sampling period. That figure has since doubled again: engineering managers who use AI daily are now shipping 4x as much code.

Why this matters: The definition of a developer is expanding. As agentic tools and AI assistants sharply reduce the cost of context-switching and writing boilerplate, managers are finding it far easier to get back into the codebase without sacrificing their leadership responsibilities. Combined with an industry-wide trend toward flatter organizational structures, this is enabling a genuine return of the player-coach: EMs can stay technically sharp and contribute directly to the product.

The question then is: if EMs can meaningfully contribute code again, should org structures evolve to reflect that? From what we’re hearing, it’s top of mind for many leaders, but most are still in “watch and learn” mode. No one we’ve spoken to has made structural changes based on this shift yet, but we’ll continue to monitor this trend.

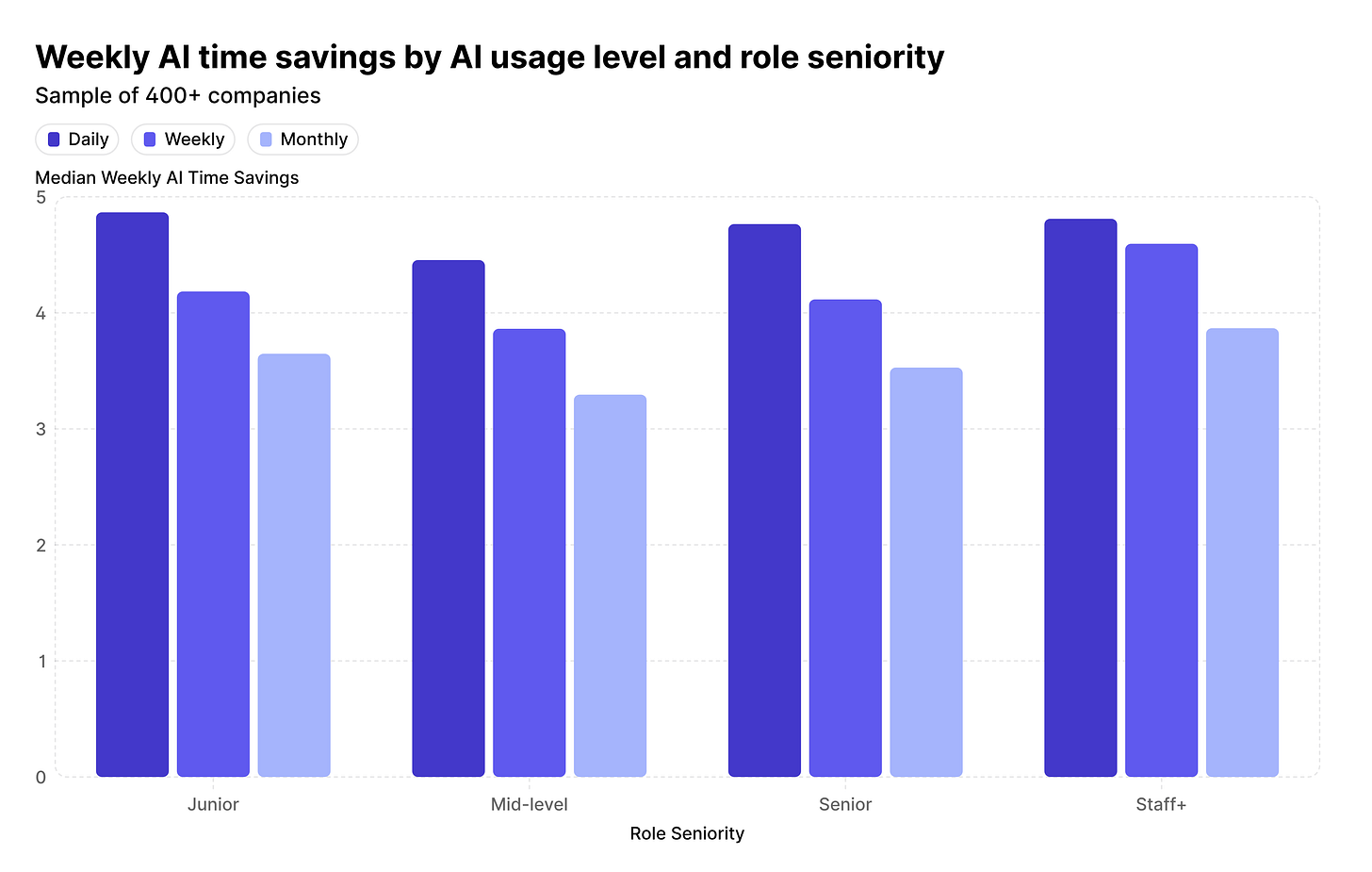

Junior engineers now save more time with AI than Staff+ engineers

In our Q4 2025 report, Staff+ engineers who used AI daily were regaining more time than any other tenure band. Just three months later, the data tells a different story.

Junior engineers who use AI daily are now saving 4.9 hours per week, edging out daily Staff+ users who are saving 4.8 hours.

Time savings alone don’t tell the full story, and this shift calls for heightened attention to quality, maintainability, and security. Still, it is notable to see this change in AI’s impact on junior engineers’ work.

Why this matters: Staff+ engineers, who typically spend more of their time mentoring and reviewing than writing net-new code, are still seeing benefits, but junior engineers now lead in total time saved. The full report includes a look at AI’s impact on quality, and we’ll continue to pay close attention to quality measures among junior developer cohorts.

What else is in the report?

The full Q1 2026 report provides comprehensive data and visual benchmarks on:

Rust-specific gains: How improvements in model reasoning and agentic loops have helped Rust leapfrog other modern languages in AI-driven time savings.

Quality volatility: Why some companies are seeing change failure rates swing by 2 percentage points (a 50% relative increase in defects), and why automated testing is no longer optional.

The shadow AI risk: How developers are bypassing enterprise guardrails, and why acceptable use policies are critical for ensuring safe use of AI.

Small vs. large enterprises: Why companies with fewer than 200 developers are outpacing large enterprises in efficiency gains.
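The change-failure-rate figure in the quality-volatility item pairs an absolute swing with a relative one. Assuming the "2%" is a change of 2 percentage points and that it corresponds to the stated 50% relative increase, the implied baseline follows directly (a back-of-the-envelope reading, not a number taken from the full report):

$$
\text{relative increase} = \frac{\Delta\,\text{CFR}}{\text{CFR}_{\text{before}}}
\quad\Rightarrow\quad
\text{CFR}_{\text{before}} = \frac{2\ \text{pp}}{0.50} = 4\%,
\qquad
\text{CFR}_{\text{after}} = 4\% + 2\ \text{pp} = 6\%.
$$

Under that reading, a company moving from a 4% to a 6% change failure rate would see 50% more failed changes at the same deployment volume.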

Final thoughts

As always, a reminder: there is no “average” experience with AI impact. Industry trends and averages like those in this report can help you contextualize your performance and spot patterns, but I caution against treating them as a guide to what your performance should look like. AI impact is highly uneven and looks very different from one organization to the next. Some organizations are doing very well on throughput while quality suffers; others have used AI effectively for migrations but are struggling to incorporate it into testing and release processes. Averages abstract away the nuances of each company’s transformation journey. The best way to use this information is to enhance, not replace, your own metrics and AI strategy.

This week’s featured DevProd job openings. See more open roles here.

Cashea is hiring an Infrastructure & Developer Productivity Platform Engineering Manager | Remote

BNY is hiring an SVP, Application Development Manager | Pittsburgh, PA

Figma is hiring a Staff Software Engineer, Developer Experience | Remote; US

Leidos is hiring a Platform Engineer | Remote; US

Reddit is hiring a Staff Software Engineer, Developer Experience | Remote; US

Weave is hiring a Senior Platform Engineer, Data Infrastructure | Remote; US

That’s it for this week. Thanks for reading.