AI productivity gains: More modest than expected

Findings and discussion from DX's longitudinal study across 400+ companies.

Welcome to the latest issue of Engineering Enablement, a weekly newsletter sharing research and perspectives on developer productivity.

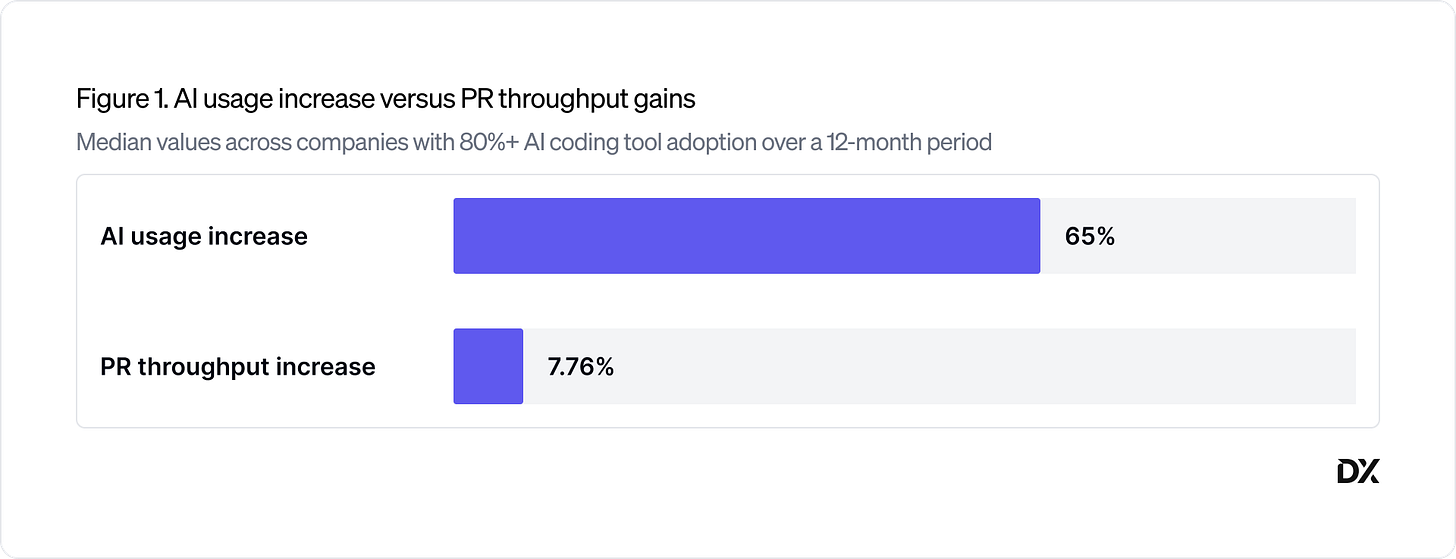

Over the past 16 months, DX has been running a longitudinal study on AI’s impact on engineering velocity across a sample of more than 400 engineering organizations. We found that as AI tool usage increased by an average of 65%, median PR throughput increased by just under 8%. Most organizations are landing in the 5–15% range—a meaningful gain, but far below the 3x or 10x expectations many leaders are being held to.

That gap raises some questions. Why aren’t the gains higher? Where is reclaimed time actually going? And how should leaders be thinking about where to invest next?

This is what Brian Houck and I discussed to kick off the mainstage program at the inaugural DX Annual, which brought together roughly 500 senior engineering and developer productivity leaders for a day focused on navigating the AI era. Brian is a well-known developer productivity researcher whose work we’ve referenced often in this newsletter, from past interviews with him to summaries of papers he has co-authored. In this conversation, Brian asked me about the study methodology and what sparked this research, as well as what’s limiting gains, how to measure AI’s impact, and how leaders can unlock greater gains.

Today we’re releasing the full report. You can download it here: AI and Engineering Velocity: A Longitudinal Analysis.

What follows is a lightly edited transcript of my conversation with Brian at DX Annual.

Brian: To kick us off, Abi—you’re sitting on one of the largest datasets on how engineers work in the real world. This new report digs into what’s happening as AI adoption scales across the industry. Before we get into the findings, what motivated this research?

Abi: Thanks, Brian. A little background on this study. Nearly every conversation I’ve had with folks over the past few months has started the same way: “My CEO and our executives are expecting astronomical gains because there’s so much hype about AI in the media, but that’s not what we’re seeing on the ground. How do we close that gap or set realistic expectations? How do we even know what to aim for?”

I’d bet everyone reading this has experienced that to some extent. So at DX, we started asking ourselves the same question: what does the data show about what companies are actually experiencing? That’s what we’ve been digging into. This is just the beginning; there’s a lot more to unpack and a lot of nuance, but at least we now have a first look at what’s happened over the past 16 months as AI adoption has matured across companies.

Brian: I’d love to nerd out on research methodology for a minute. Walk us through how DX actually investigated this. How did you design the study and decide which companies to include?

Abi: Our customer network at this point is about 500 companies. For this study, we took a sample of those. We looked for companies that had reached a point of maturity in their developers’ adoption of AI, which we defined as over 75% monthly active usage of AI coding tools. In almost all cases, there’s been a significant rise in adoption over the past 12 months. We also narrowed the scope to companies with over 100 engineers and excluded companies that had gone through events that could have affected the results: major liquidity events such as M&A or IPOs, or regulatory changes.
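The inclusion criteria work like a simple filter over candidate organizations. The sketch below is illustrative only: the `Org` fields, the `include_in_sample` helper, and the example organizations are hypothetical, not DX’s actual data model or pipeline.

```python
from dataclasses import dataclass


# Hypothetical per-organization record; field names are illustrative assumptions.
@dataclass
class Org:
    name: str
    engineers: int
    ai_monthly_active_rate: float  # share of developers using AI coding tools monthly (0.0 to 1.0)
    had_liquidity_event: bool      # e.g. M&A or an IPO during the study window
    had_regulatory_change: bool


def include_in_sample(org: Org) -> bool:
    """Return True if the org meets all of the stated inclusion criteria."""
    return (
        org.ai_monthly_active_rate > 0.75  # mature adoption: over 75% monthly active usage
        and org.engineers > 100            # over 100 engineers
        and not org.had_liquidity_event    # exclude M&A, IPOs, and similar events
        and not org.had_regulatory_change  # exclude regulatory changes
    )


candidates = [
    Org("org_a", 250, 0.82, False, False),
    Org("org_b", 80, 0.90, False, False),   # too small
    Org("org_c", 400, 0.60, False, False),  # adoption not yet mature
    Org("org_d", 1200, 0.95, True, False),  # went through an acquisition
]
sample = [org.name for org in candidates if include_in_sample(org)]
print(sample)  # ['org_a']
```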

In terms of methodology, it’s really hard to isolate causality: what’s due to AI, and what’s due to confounding factors? One approach I see a lot is cross-sectional analysis, which compares developers who use AI to developers who don’t. That approach is flawed because the developers using AI are often the ones who were already coding the most, so they always look better.

What we did instead is look at longitudinal data at the organizational level. As AI adoption has matured, what has been the impact on organizational velocity and throughput?
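A toy example shows why the cross-sectional comparison can mislead. This is a minimal sketch with made-up numbers, assuming that the most prolific developers adopt AI first and that AI has no real effect on anyone’s output. Comparing adopters with non-adopters still makes AI look like a 3x multiplier, while comparing the same organization before and after shows no change.

```python
from statistics import mean

# Hypothetical weekly PR counts per developer before the AI rollout.
baseline = {"dev1": 2, "dev2": 3, "dev3": 4, "dev4": 8, "dev5": 9, "dev6": 10}

# Assumption: the most prolific developers adopt AI, and AI has zero true effect.
adopters = {"dev4", "dev5", "dev6"}
after = dict(baseline)  # nobody's output changes after the rollout

# Cross-sectional: adopters vs. non-adopters at a single point in time.
adopter_avg = mean(after[d] for d in adopters)
non_adopter_avg = mean(after[d] for d in after if d not in adopters)
cross_sectional_ratio = adopter_avg / non_adopter_avg

# Longitudinal, organization level: the same org before vs. after.
longitudinal_change = sum(after.values()) / sum(baseline.values()) - 1

print(cross_sectional_ratio)  # 3.0
print(longitudinal_change)    # 0.0
```

A real before/after comparison still picks up anything else that changed over the same period, which is one reason the study excluded organizations that went through M&A, IPOs, or regulatory changes.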

Brian: What were your hypotheses, and what did you actually find?

Abi: As a developer myself, I had some gut-feel hypotheses, but honestly, from a study standpoint, we really didn’t know. We were pushing the team, asking “what’s the answer?” We wanted to know just as much as you probably do.

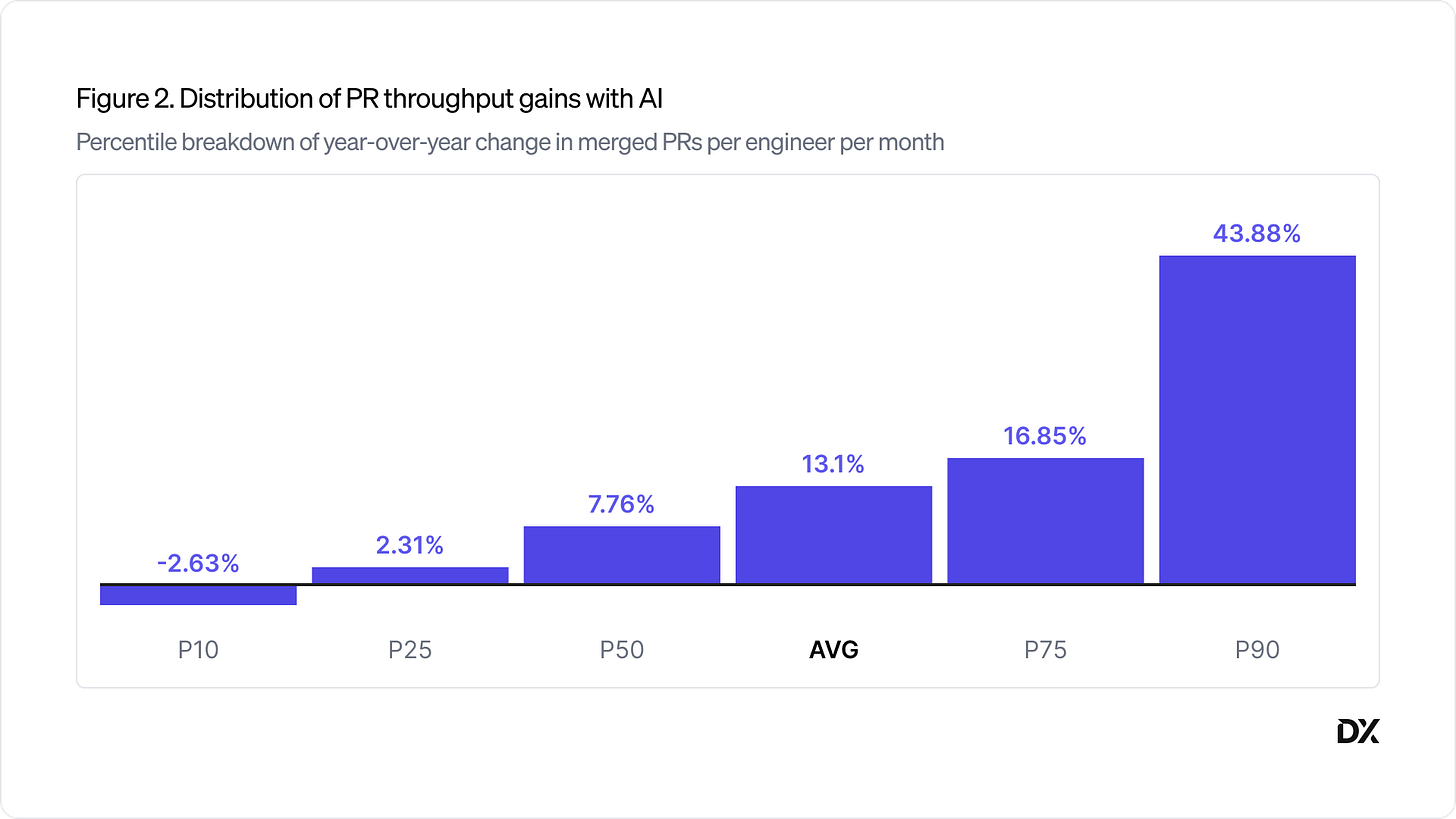

What we ultimately found is that most organizations fall in the 5–15% range in terms of PR throughput increase. The median was around 8%, and the mean was around 11%. So it’s a real gain, but well below what most leaders expect.
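A mean above the median is what a right-skewed distribution looks like: a handful of organizations with large gains pull the average up without moving the middle. The per-organization numbers below are hypothetical, not the study’s data, and `pct_change` is an illustrative helper.

```python
from statistics import mean, median


def pct_change(before: float, after: float) -> float:
    """Percent change in PR throughput for one organization."""
    return (after - before) * 100 / before


print(pct_change(200, 216))  # 8.0

# Hypothetical per-organization gains (%); one outlier forms a long right tail.
gains = [2, 4, 5, 6, 7, 8, 9, 10, 12, 35]

print(median(gains))  # 7.5
print(mean(gains))    # 9.8
```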

It’s much more modest than many CEOs expect, especially when they’re hearing from their CEO friends about the 10x gains those companies are supposedly delivering. Based on our engagements with companies and customers, though, it wasn’t completely surprising. There are a lot of factors underlying that, which we’ll get into.

Brian: One of the central themes of the SPACE framework is that developer productivity is nuanced and about much more than counting activities. So given that, why did you choose PR throughput as your primary measure?

Abi: Measuring velocity or productivity is inherently challenging. We did look across a number of different metrics, including the full spectrum of SPACE and the DX Core 4. But for what we wanted to focus on and publish, we felt PR throughput was the most relevant and practical right now, mostly because that’s what we see most organizations talking about and focusing on. We wanted to meet the world where it’s at.

We also explored our proprietary metric TrueThroughput, which uses AI to weight each PR by relative size and complexity so it takes some of the noise out. We liked that signal, but again, for relevance and practicality, PR throughput is a more accessible metric. We have more data on other metrics and how those have been affected, and we’ll eventually publish those as well.

Brian: I suspect that, for many, a 5%, 7%, 10% increase in PR throughput matches what they’ve been feeling. But as you mentioned, when I talk to business leaders, they’re often expecting 20, 30, 40, 50% increases in productivity. So why do you think the gains are lower than what people outside the industry might expect?

Abi: Our team conducted follow-up interviews to really dig into that question. There were a number of factors. These are just the top themes, not the full list.

The top one probably wouldn’t surprise most of us: coding is not the primary bottleneck for engineers. Brian, at Microsoft you published a study on where engineering time goes, which found that only about 14% of developer time is spent coding. It’s a very small part of where our time and money goes. So if AI is only optimizing that slice, that partly explains why the enterprise velocity gains are limited.
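One way to see why a small coding share caps the overall gain is Amdahl’s law, a framing we’re adding here rather than one used in the conversation. A minimal sketch, assuming that 14% of time is coding, that AI speeds up only that slice by a factor `s`, and that everything else stays the same (so it ignores the new bottlenecks discussed next, which would only lower the result). The overall speedup is 1 / ((1 − 0.14) + 0.14 / s), which can never exceed 1 / 0.86, or about 1.163x.

```python
def overall_speedup(coding_share: float, coding_speedup: float) -> float:
    """Amdahl's law: only the coding share of time gets faster; the rest is unchanged."""
    return 1 / ((1 - coding_share) + coding_share / coding_speedup)


for s in (2, 10, 100):
    print(f"coding {s}x faster -> overall {overall_speedup(0.14, s):.3f}x")

# Upper bound as coding time approaches zero: 1 / (1 - 0.14).
print(f"ceiling: {1 / (1 - 0.14):.3f}x")
```

Time saved and PR throughput are not the same thing, so treat this as a rough ceiling on time-based speedup rather than a prediction of PR counts.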

We also heard how AI tools and new automation introduce new bottlenecks. Things like review burden, technical debt, cognitive debt—which is a newer concept we can talk about. We also heard about challenges with adoption—a lot of social friction and cultural clashes that are inhibiting full adoption. There were a few more, but those were the major themes.

Brian: You don’t have to go far out in the distribution before you see some organizations with substantially higher gains. Any time the median and mean are that different, it implies outliers. So what set apart some of the companies with disproportionately high gains from the more typical experience?

Abi: We don’t have a great answer to this yet. Our first focus was on why the gains aren’t higher. The next question is: for those where gains are higher, why?

There are two parts to how we want to approach that. One is that we’re working to tease out whether the companies at the top of the range are seeing real, material gains or superficial, inflated numbers. We want to peel back the onion.

Two, assuming the gains are real, we want to understand what strategies and factors are driving those results. I think a lot of it boils down to what I’ve seen anecdotally: an all-in culture, a fully bought-in organization with centralized rollout and championing of AI tools. It’s more of a cultural shift toward infusing AI into the entire SDLC, not just coding, with an aligned push to drive adoption.

Brian: That aligns with some recent research I published showing that even something like leadership advocacy significantly increased the gains people saw from AI. You said something earlier that I think is really important and often misunderstood: software engineering is so much more than coding. Coding may be central to our identity as developers, but it’s only about 14% of our day.

One of the things I’ve been seeing in my research is that, yes, we’re producing 5, 7, 10, 15% more pull requests, but we’re doing it substantially more efficiently. For each hour of hands-on-keyboard coding time, we’re producing about 40% more PRs. That hints that we are reclaiming some coding time. Do you have any idea where that time is getting reinvested?
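Brian’s two figures together imply how much coding time is being reclaimed. Since total PRs = coding hours × PRs per coding hour, the ratio of new to old coding hours is the PR growth ratio divided by the per-hour growth ratio. A minimal sketch of that arithmetic, assuming both figures describe the same developers over the same period and that PRs are comparable in size:

```python
def coding_hours_ratio(pr_growth: float, prs_per_hour_growth: float) -> float:
    """New coding hours divided by old coding hours.

    Because total PRs = coding hours * PRs per coding hour,
    coding hours scale as (1 + pr_growth) / (1 + prs_per_hour_growth).
    """
    return (1 + pr_growth) / (1 + prs_per_hour_growth)


# Total PR growth in the 5-15% range, with roughly 40% more PRs per coding hour.
for growth in (0.05, 0.10, 0.15):
    ratio = coding_hours_ratio(growth, 0.40)
    print(f"PRs +{growth:.0%}: coding time down about {1 - ratio:.0%}")
```

Under those assumptions, coding time falls by roughly 18% to 25%. If coding is about 14% of the week, that is only about 2.5% to 3.5% of total working time.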

Abi: This has been a similarly perplexing question. For the past six months, I’ve heard customers asking: we’re seeing meaningful self-reported time savings from developers, but we’re not seeing that show up in our output and throughput. Where’s the disconnect? Where’s that time going?

We don’t know yet; this requires more research. But one preliminary hypothesis is that if developers only spend 14% of their time coding, it makes sense that the time they’re recouping is distributed proportionally across all their activities. In that case, only about 14% of the time savings would go back into coding, which would explain why we don’t see output gains that map one-to-one to time savings.
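The proportional-reinvestment hypothesis is simple arithmetic. A minimal sketch, assuming the only split that matters is coding (about 14%) versus everything else, and a hypothetical 10 hours saved; `reinvest_proportionally` is an illustrative helper, not a DX model.

```python
def reinvest_proportionally(saved_hours: float, shares: dict[str, float]) -> dict[str, float]:
    """Spread saved hours across activities in proportion to each activity's share of time."""
    total = sum(shares.values())
    return {activity: saved_hours * share / total for activity, share in shares.items()}


# Only the 14% coding share comes from the discussion; the rest is lumped together.
time_shares = {"coding": 0.14, "everything_else": 0.86}

reinvested = reinvest_proportionally(10, time_shares)
print({activity: round(hours, 2) for activity, hours in reinvested.items()})
# {'coding': 1.4, 'everything_else': 8.6}
```

Of 10 saved hours, only about 1.4 would flow back into writing code under this hypothesis, which is consistent with output gains lagging self-reported time savings.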

Another hypothesis ties back to why the gains aren’t higher: some of the time savings come with side effects that require more time, like overseeing the work, QAing it, reviewing it. There’s also the lag factor: what do developers do while they’re waiting for agents to produce the code?

So those are some of the hypotheses, but we don’t have a clear answer yet.

Brian: There’s a reason we don’t treat lines of code as a good measure of productivity: writing more code isn’t always the right answer. As we increase velocity, what are some of the potential unwanted side effects you’ve been seeing?

Abi: Quality and cost are the two I hear customers talking about constantly. Cost is something we’re all trying to wrangle or at least get visibility into. Quality is something we all understand is an underlying risk, but I think it’s still early days. We did see Amazon go very public with this, but there’s some delay between the technical debt we may be creating and the consequences that follow.

The biggest thing I’ve been talking to customers about is a cultural risk I’ve been calling false velocity. I see this in so many organizations right now. To some extent, even developer productivity leaders can fall into this trap: we get so focused on showing off how much faster and more prolific we are with AI that we don’t ask whether it’s leading to meaningful improvement.

You probably have engineers in your organization showing off really impressive things they can do with Claude or whatever tool. But are we asking: is your team’s product velocity actually increasing? Leaders are talking about how many more PRs and lines of code they’re generating, but is their roadmap actually accelerating? Is the quality of their products and code sustainable? There’s a real risk of being so focused on demonstrating what we can do that we’re not paying attention to whether we’re actually getting better—whether we’re materially improving our businesses.

Brian: So what’s your recommendation to engineering leaders who want to apply AI beyond just code generation? Where should they be looking?

Abi: That’s the biggest question I’m hearing from leaders right now. A lot of folks are at a point where they’ve rolled out one or more popular coding tools. Adoption is in a fairly satisfactory place, and they’re asking: what next? Especially if you’re sitting at that 10% number and asking how to get further.

The things I’m hearing about and seeing are, first, looking left and right of code. We’ve accelerated coding to some extent, but if that’s only 14% of where our time and money goes, what about the other 86%? How do we accelerate and optimize that?

Second, beyond thinking about the SDLC, there’s the question of how we improve the developer experience more deeply. Things like deep work and documentation—the friction points developers experience. How can we leverage AI to materially improve those?

And the third theme is this idea—there are many names for it—autonomous engineering, async engineering, background agents. I think today most of us are focused on leveraging AI to accelerate human work. Humans are still in the cockpit, steering and monitoring the work of the agents. The question is: how do we complement that acceleration with augmentation? Meaning more autonomous agents that are truly augmenting your human workforce and working in parallel, not just underneath your developers. Those are the three big themes.

Brian: You and I are both huge fans of Dr. Margaret-Anne Storey’s work. She’s been talking a lot about cognitive debt and the human cost of these AI transformations. What have you been looking at in that area?

Abi: I’m a big fan of that research. I think it falls under what I was talking about earlier in terms of risks and the time delay; we’re not always thinking about what the consequences might be 12 months from now if we adopt certain ways of working.

The loss of human understanding of the systems we’re building is a really interesting risk. There are different takes on how material it is. Some people argue it doesn’t matter if humans don’t deeply understand the systems, because they can use AI to quickly regain that understanding when needed. Others argue: no, if you need to call the mechanic, they should have mastery over the machine. I don’t think we know yet, but it’s a valid question.

Brian: We’ve hinted at it throughout this conversation—are we actually delivering innovation faster, or are we just moving bottlenecks around? My first reaction to an unanswered question is always: how do we measure it? Any thoughts on how leaders should be thinking about measurement as AI transforms how we work?

Abi: In our approach to measurement, some things stay the same and some things need to evolve. How we think about the overall software organization, things like quality and velocity, stays the same. And especially if we’re trying to understand how things are changing with AI, you need consistent measures before, during, and after this transformation.

But there are also new tools, new ways of working, new workflows being born every week. Measuring these new ways of working requires new approaches.

I’ll touch on two things. One is that I think increasingly there’s a need to separate how you’re measuring and framing AI’s impact. You should be thinking about acceleration—how much faster are our humans—as one bucket. And augmentation—how much more capacity are we generating with agents—as a second bucket. Thinking of those as two separate components in your overall formula is useful.
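Keeping acceleration and augmentation as separate terms might look like the sketch below. It assumes, purely for illustration, that both are expressed in human-equivalent hours and simply added together; the `AIImpact` class, its field names, and the numbers are hypothetical, not DX’s formula.

```python
from dataclasses import dataclass


@dataclass
class AIImpact:
    human_hours: float             # baseline human engineering hours in the period
    acceleration: float            # fractional speedup of human work (e.g. 0.08 for 8%)
    agent_equivalent_hours: float  # human-equivalent hours delivered by autonomous agents

    @property
    def acceleration_hours(self) -> float:
        """Capacity gained because humans work faster."""
        return self.human_hours * self.acceleration

    @property
    def augmentation_hours(self) -> float:
        """Capacity added by agents working alongside humans."""
        return self.agent_equivalent_hours

    @property
    def total_gain_hours(self) -> float:
        """Assumed additive combination of the two buckets."""
        return self.acceleration_hours + self.augmentation_hours


impact = AIImpact(human_hours=10_000, acceleration=0.08, agent_equivalent_hours=300)
print(impact.acceleration_hours, impact.augmentation_hours, impact.total_gain_hours)
```

Reporting the two terms separately shows whether growth is coming from faster humans or from added agent capacity, which a single blended number would hide.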

The question of how to measure agents is especially interesting. We’ve been exploring this idea of agent hourly rate: the human-equivalent hours an agent delivers, divided by its cost. That gives you a return-on-investment figure you can compare to human hourly rate, which is an interesting comparison point.
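Taken literally, dividing human-equivalent hours by cost gives hours per dollar; its reciprocal, cost per human-equivalent hour, is in the same units as a human hourly rate. A minimal sketch with hypothetical figures (the $2,000 agent spend, 100 equivalent hours, and $120 human rate are made up), which leaves aside the hard part: estimating human-equivalent hours in the first place.

```python
def hours_per_dollar(equivalent_hours: float, agent_cost: float) -> float:
    """Human-equivalent hours an agent delivers per dollar spent on it."""
    return equivalent_hours / agent_cost


def cost_per_equivalent_hour(equivalent_hours: float, agent_cost: float) -> float:
    """Dollars per human-equivalent hour; same units as a human hourly rate."""
    return agent_cost / equivalent_hours


agent_cost = 2_000.0       # hypothetical monthly agent spend ($)
equivalent_hours = 100.0   # hypothetical human-equivalent hours delivered
human_hourly_rate = 120.0  # hypothetical fully loaded human rate ($/hour)

print(hours_per_dollar(equivalent_hours, agent_cost))          # 0.05
print(cost_per_equivalent_hour(equivalent_hours, agent_cost))  # 20.0
print(cost_per_equivalent_hour(equivalent_hours, agent_cost) < human_hourly_rate)  # True
```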

Something really new—not even in our minds last September—is this idea of agent experience. In the same way DX was founded on the idea of measuring developer effectiveness by going to developers and getting signal from them, we’ve applied a similar approach to agents. How do you measure AI agent effectiveness? You go to the agents and get feedback from them on where their bottlenecks and constraints are. We’ve just rolled that out. We’re surveying agents, which is pretty wild.

Brian: That is wild. To wrap up our conversation, give me one quick finding. What’s one thing you’ve learned about making agents more effective?

Abi: You have to ask them, just like we ask our developers. It’s really early days, but we’ve initially begun measuring four factors. For example, we ask the agents: how were the requirements you were given? How easily were you able to understand the codebase you were working in? How well were you steered by the human you were pairing with? We get quantitative metrics from that as well as qualitative feedback—the agent explains where it had challenges or bottlenecks. That’s the idea.
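An agent experience survey can be recorded much like a developer survey: a score per factor plus free-text comments. A minimal sketch, assuming a hypothetical 1-5 scale and using only the three factors named above (the fourth isn’t specified); `AgentSurveyResponse` and `factor_averages` are illustrative names, not a DX API.

```python
from dataclasses import dataclass
from statistics import mean

# The three factors named in the conversation; the fourth is not specified.
FACTORS = ("requirements", "codebase_understanding", "human_steering")


@dataclass
class AgentSurveyResponse:
    task_id: str
    scores: dict[str, int]  # hypothetical 1-5 rating per factor
    comment: str = ""       # the agent's own explanation of its bottlenecks


def factor_averages(responses: list[AgentSurveyResponse]) -> dict[str, float]:
    """Average each factor's score across all responses."""
    return {f: mean(r.scores[f] for r in responses) for f in FACTORS}


responses = [
    AgentSurveyResponse(
        "task-1",
        {"requirements": 2, "codebase_understanding": 4, "human_steering": 5},
        "Acceptance criteria were ambiguous.",
    ),
    AgentSurveyResponse(
        "task-2",
        {"requirements": 3, "codebase_understanding": 4, "human_steering": 3},
    ),
]
averages = factor_averages(responses)
weakest = min(averages, key=averages.get)  # lowest-scoring factor
print(averages, weakest)
```

The lowest-scoring factor suggests where the agents’ biggest constraint is, and the comments help explain why.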

Brian: I love it—the same principles of measurement apply. That is unfortunately all the time we have, but I think we covered a lot of ground.

Abi: A special thank you to Brian, and everyone who attended DX Annual. Stay tuned for the session recordings, coming soon.

This week’s featured DevProd job openings. See more open roles here.

Cashea is hiring an Infrastructure & Developer Productivity Platform Engineering Manager | Remote

BNY is hiring an SVP, Application Development Manager | Pittsburgh, PA

Figma is hiring a Staff Software Engineer, Developer Experience | Remote; US

Leidos is hiring a Platform Engineer | Remote; US

Weave is hiring a Senior Platform Engineer, Data Infrastructure | Remote; US

That’s it for this week. Thanks for reading.