The AI-native developer

Findings from a new study on how AI is reshaping the developer role.

Welcome to the latest issue of Engineering Enablement, a weekly newsletter sharing research and perspectives on developer productivity.

Hi everyone 👋 I’m Brian - I’ve recently joined DX as an Applied Scientist. I previously led research for EngThrive, Microsoft’s company-wide initiative for measuring and improving developer experience. I’ve been part of this newsletter before and am now excited and honored to be a regular contributor.

For my first issue, I’m summarizing a new paper I co-authored with Rudrajit Choudhuri, Eirini Kalliamvakou, and Thomas Zimmermann, published in ACM Queue: The AI-Native Developer.

In this study, we explore how AI is reshaping software engineering by analyzing survey data from over 1,300 developers and interviews with 22 AI-fluent practitioners. We specifically look at what developers want from AI and how AI fluency develops, and discuss three possible futures for the developer role.

What developers actually want from AI

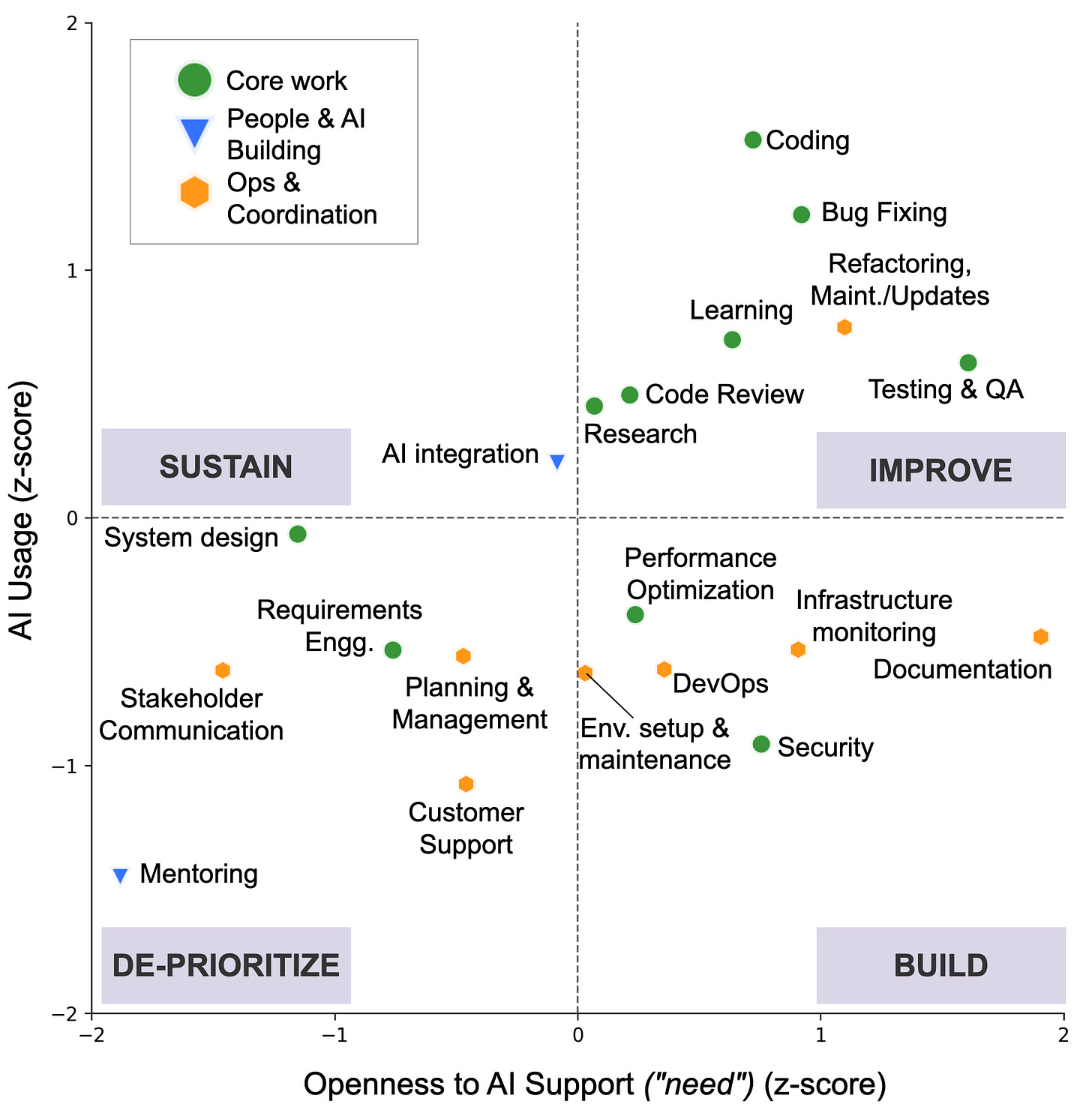

To understand where developers want AI involved—and where they don’t—we mapped their daily work across four dimensions: value, identity, accountability, and demands. This revealed three clusters. Core Work (coding, debugging, system design, testing) scored high on all four. Ops & Coordination (DevOps, documentation, stakeholder communication) scored high on value and demands, but weakly on identity. People & AI Building (mentoring, AI feature integration) scored moderately across the board, with relatively strong identity alignment.

These clusters map onto what we call an “AI opportunity space”, a plot of developers’ openness to AI support against their actual AI usage. Core Work and Ops & Coordination tasks both landed in the Build and Improve zones. Developers were open to deeper AI assistance for everything from cross-artifact debugging to environment provisioning, but reported that current tools hadn’t caught up. This suggests the barrier is less reluctance and more trust.

For production-facing work, developers consistently emphasized predictability, transparency, verifiability, and human oversight.

The De-prioritize zone was the most revealing. Relational work, like stakeholder communication and customer interactions, landed here consistently. Developers saw little role for AI beyond preparation. They wanted to retain final voice and responsibility.

How AI fluency actually develops

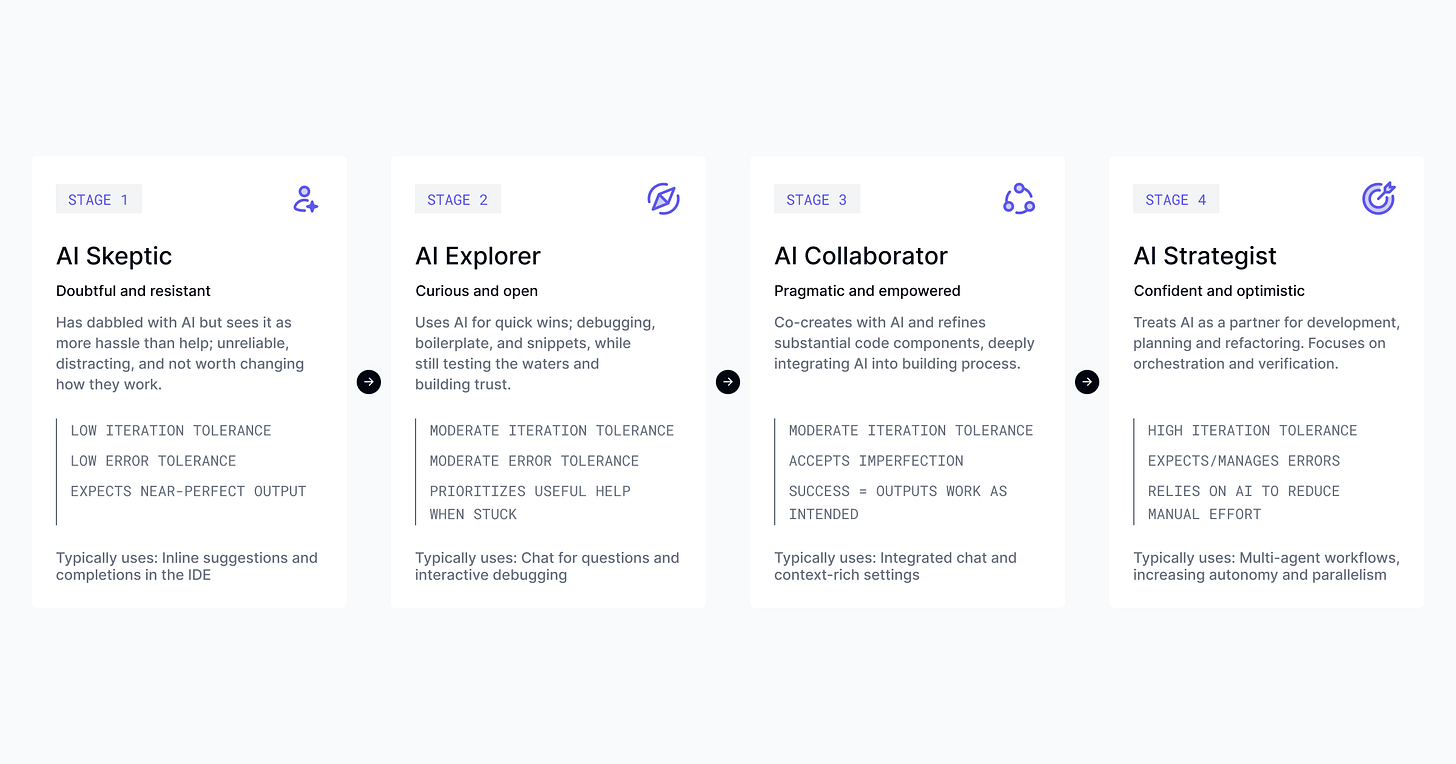

In our interviews, we observed a consistent four-stage journey from initial resistance to full orchestration.

The Skeptic starts with rational resistance. For skeptics, AI suggestions interrupted flow and created extra work. What shifted skeptics wasn’t persuasion; it was urgency. Developers saw AI fluency becoming a competitive advantage and pushed through the frustration. One put it plainly: “Either you have to embrace the AI, or you get out of your career.”

The Explorer emerges when AI starts to genuinely help. A cryptic error decoded, a familiar task automated, mundane work handled. Each small win chips away at skepticism.

The Collaborator integrates AI into the workflow earlier rather than using it only as a fallback. They treat iteration as part of the workflow rather than a recovery mechanism when AI output fails.

The Strategist orchestrates multiple agents in parallel: one defining test cases, another implementing, a third reviewing for security. They set context, review output, and decide what to delegate next. Strategists don’t just prompt; they front-load context. Some even have the AI “interview” them to surface missing requirements and business goals before a single line of code is generated. They have moved from being code producers to architects of intent. Ask them about the future, and they’ll tell you AI will write 90–100% of code within two years. What’s striking is that this prospect energizes rather than threatens them.

The tensions that don’t have easy answers

Even the most optimistic strategists we interviewed raised concerns.

The deepest was what we call the learning paradox. Mid-career developers wondered whether they were getting better at software engineering or “just better at prompting.” What does mastery look like when the skill is orchestration rather than implementation?

Deskilling was a related concern. Senior developers learned their craft through years of writing, debugging, and refactoring code. If junior developers delegate from day one, what foundational skills might they skip? Several interviewees questioned whether current onboarding approaches would prepare juniors for AI-augmented work or leave them dependent on tools they don’t fully understand.

Accountability runs underneath both. Developers put their names on code they didn’t directly write. Review and verification become the primary mechanisms for maintaining understanding and accountability, but as velocity increases, can verification norms keep pace, or will the pressure to ship quietly erode the guardrails that make delegation safe?

Three futures, and the choices that decide which we get

The paper closes by extending current patterns into three plausible futures.

Human craft at AI speed. Developers consciously choose to remain hands-on, using AI as an accelerator rather than a substitute. Tasks compress, but the shape of the work remains recognizable.

Orchestration and blended work. Software engineering is defined less by writing code and more by orchestrating intelligent systems and exercising judgment. Verification, mentoring, and design decisions take primary place; implementation gets delegated.

The Clerical Coder. In the paper’s dystopian future, AI generates all production code. Humans rubber-stamp at an industrial scale, accountable for outcomes they don’t fully understand. This is the rise of the “Software Signatory”, where you are rewarded for the volume of approvals rather than the depth of your craft. As the paper warns: If we reward speed without craft, we get volume without depth. When failures happen, the human reviewer is blamed.

There is no single trajectory here. Different teams will land in different places depending on their context and incentives. The outcomes are shaped by what organizations choose to reward and protect.

Final thoughts

AI adoption has increased, yet the gap between how developers actually work and how they want to work has not closed. If anything, it has widened.

That suggests the framing of AI as a productivity tool—one that reduces toil and frees up time for meaningful work—may be too simple. The tasks developers find tedious aren’t necessarily the ones they trust AI to handle. And the tasks they trust AI to handle aren’t necessarily the ones creating the gap.

The more useful question for leaders isn’t “what can we automate?” but “are our tools earning the trust to touch what developers find meaningful?” and “what about the work is worth protecting?”

In case you missed it, we just released the DX Annual recording library. You can watch the recordings by clicking through the Sessions page here. We’ll release recordings on the podcast soon as well, so stay tuned.

That’s it for this week. Thanks for reading.